I’m a Ph.D. student at Singapore Management University, working on human-computer interaction with Prof. Li Jiannan as part of the SMU-HCI community. I completed my Master of Computing at the National University of Singapore, working with Prof. Zhao Shengdong and Prof. Tony Tang. I hold a BSc (Hons) in Computing Science from the University of Glasgow, supervised by Prof. Jeannie Lee.

My research asks what we lose when AI does too much. I study human–AI interaction in learning and creative contexts — exploring how to make AI more expressive and capable, but not at the expense of the human efforts worth protecting. My work examines how teaching, annotating, and explaining shape cognition and collaboration, and what happens to those processes when AI absorbs them. I build systems, run studies, and develop theoretical frameworks to argue for a more selective AI — one that knows when to step back.

in progress

Working on when optional help becomes default in learning tools — designs that keep struggle, reflection, and human teaching moves legible.

Exploring annotation and explanation as places where human effort should stay visible as models get more capable.

Prototyping creative tools for children’s exploration — play, tinkering, and open-ended making — with a lighter-touch AI default so curiosity and agency stay in the foreground.

publications

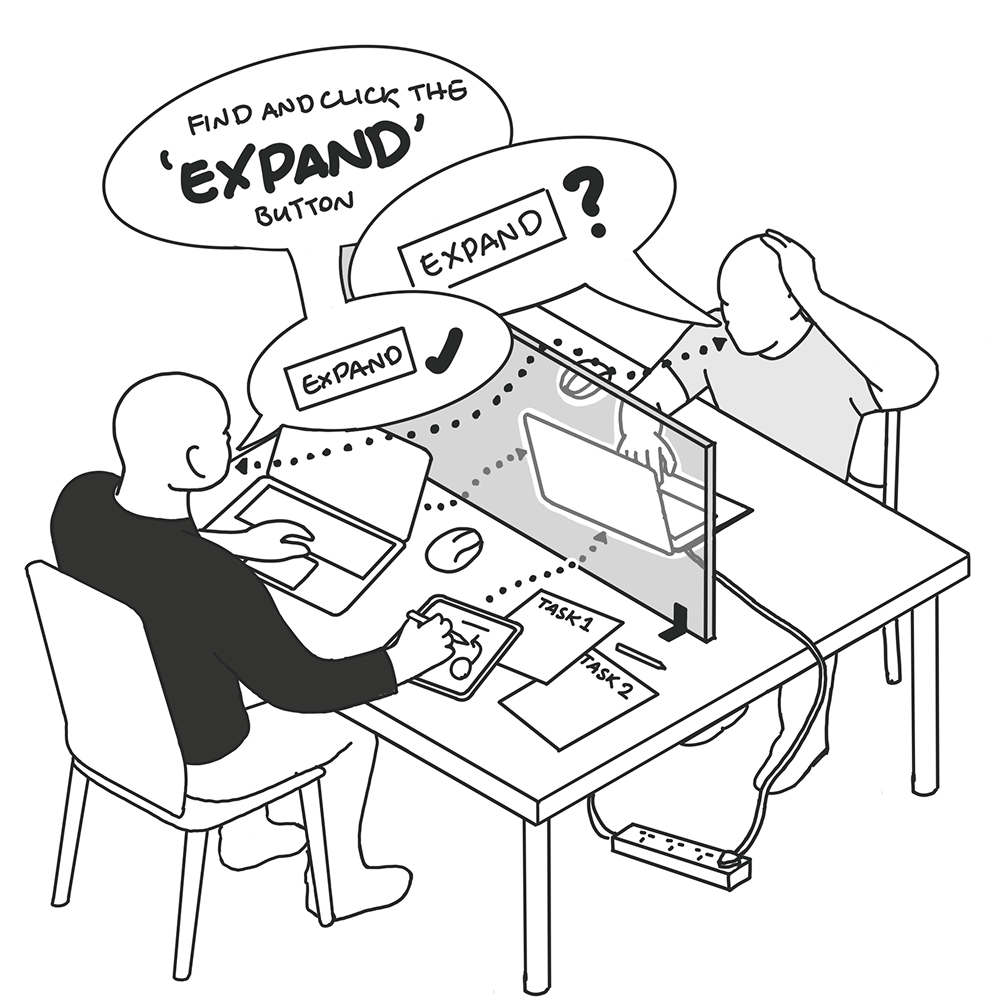

In an observational study of expert–novice graphic design tutoring, speech, annotations, and remote control each trade precision for learner agency and sense of control over the shared workspace. Teachers mix modalities adaptively, supporting contiguity principles while motivating precision–agency trade-offs and digital territoriality as design constraints for adaptive software guidance.

position, workshop, and poster papers

We borrow the idea of desire paths to reframe learner deviation in software training as an informative signal about needs, prior knowledge, and curriculum fit, rather than an error to stamp out. Evidence from human Figma tutors shows experts already negotiate paths by shifting speech, annotation, and remote control rather than enforcing one rigid route.

We flip the usual question from how to ship GenAI tutors to what role they should play, given that effective human teachers withhold intrusive help to protect agency. Grounded in observations of Figma tutoring, we argue that restraint and friction can be virtues, and we outline evaluation and design implications for AI systems in education.

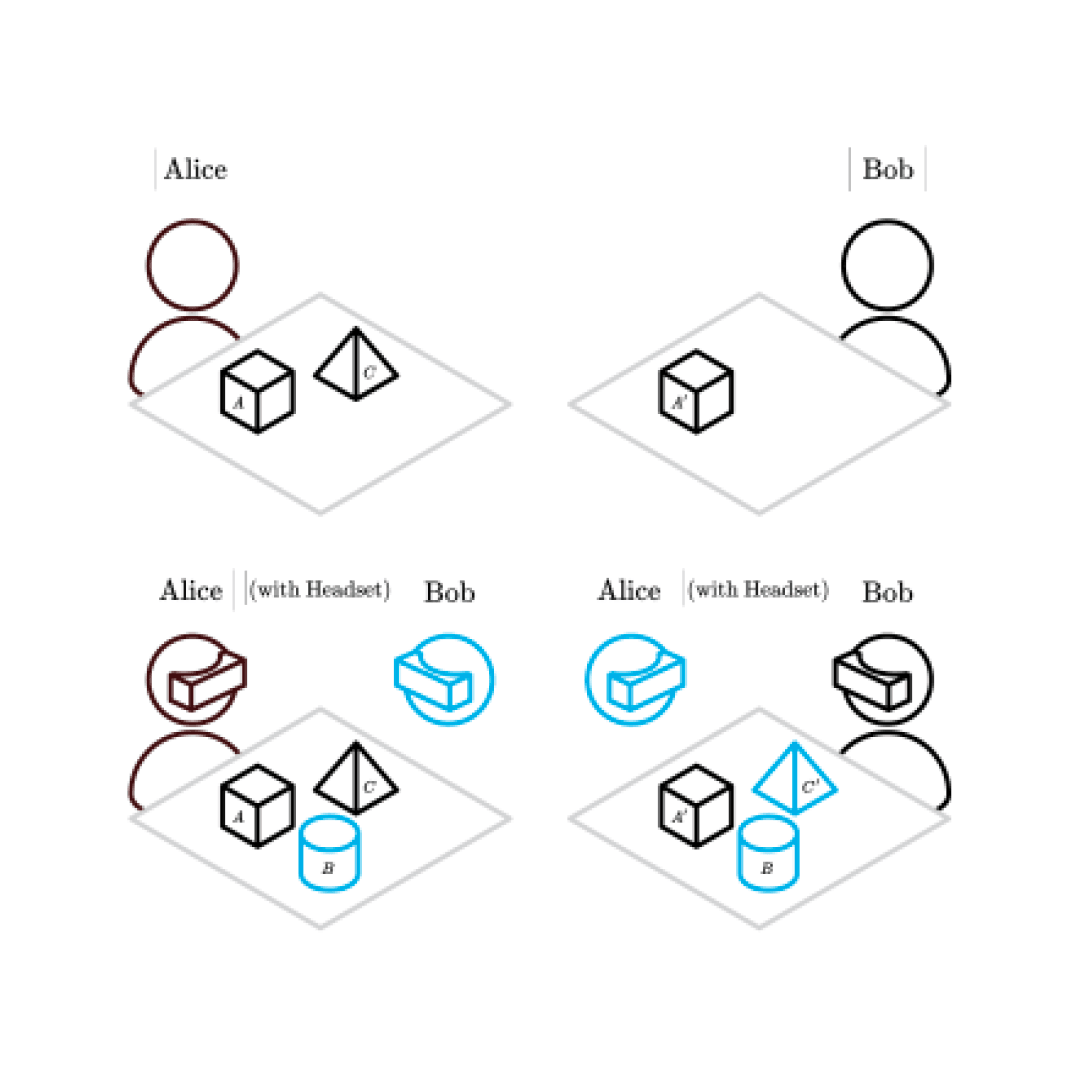

Cross-reality remote collaboration often yields partially replicated workspaces where artefacts drift out of sync across sites and collaborators lose mutual visibility of manipulations. We outline artefact awareness support so teams retain shared understanding of objects and changes despite asymmetric physical access.