'Desire Paths' in AI-Guided Software Learning

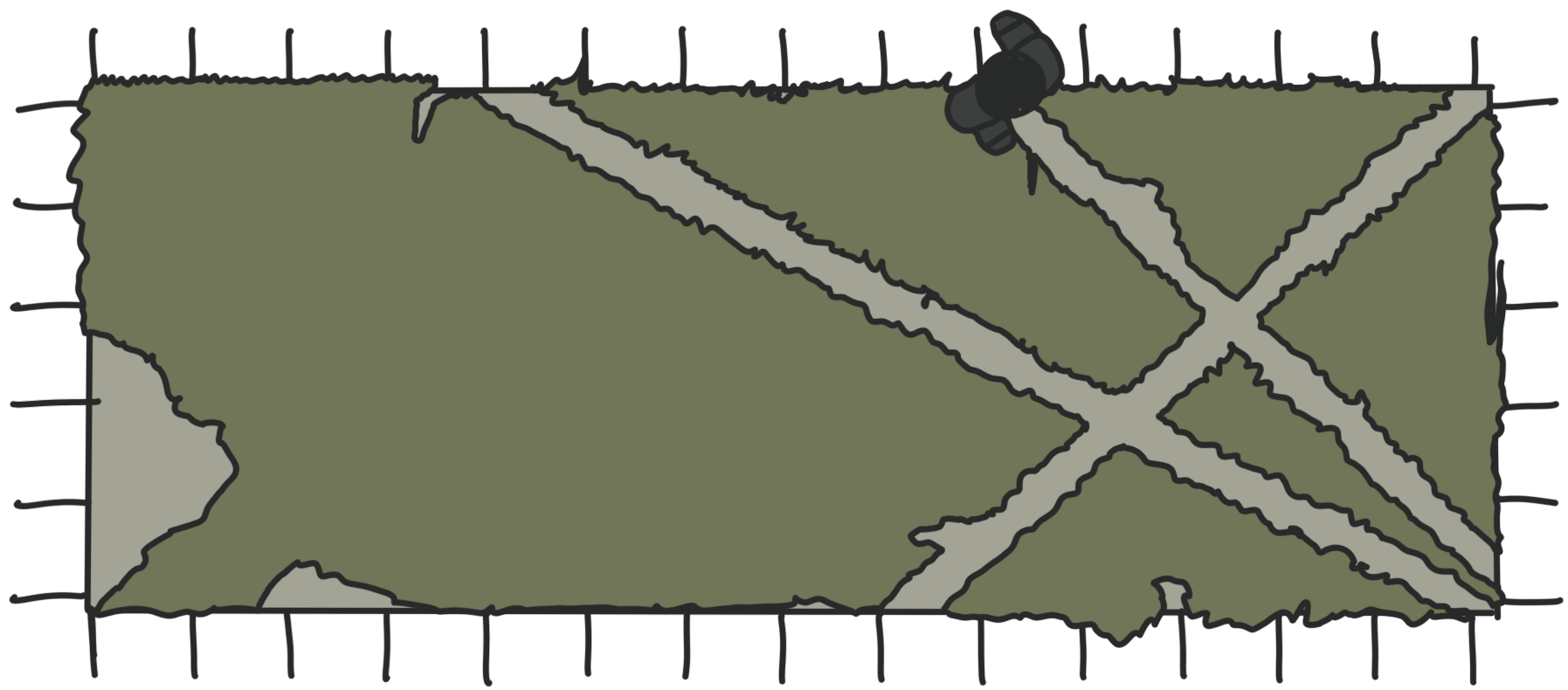

Learners who deviate from prescribed instructional paths are not necessarily failing; they may be revealing signals about themselves, the curriculum, and the interface. Yet AI tutoring agents for software learning typically treat deviation as an error to be corrected. We argue that this framing is fundamentally misaligned with how learning actually unfolds. Drawing on the urban planning concept of desire paths (informal trails that emerge when pedestrians route around formal walkways), we reframe learner deviations as meaningful signals of unmet needs, prior knowledge, and curriculum gaps. We ground this argument in an empirical study of human software tutors teaching Figma. The study shows that expert tutors already read and respond to desire paths by fluidly shifting across instructional modalities (speech, visual annotation, and remote screen control) in response to learner behavior. We offer a preliminary taxonomy of deviation types and argue that the Human↔Agent↔UI triangle in learning contexts must be redesigned around negotiated path-finding rather than path enforcement. Making this negotiation possible, we contend, requires frameworks and empirical study of adaptive multimodal intervention design for tutoring systems.

This paper raises several concerns that the present study does not address.