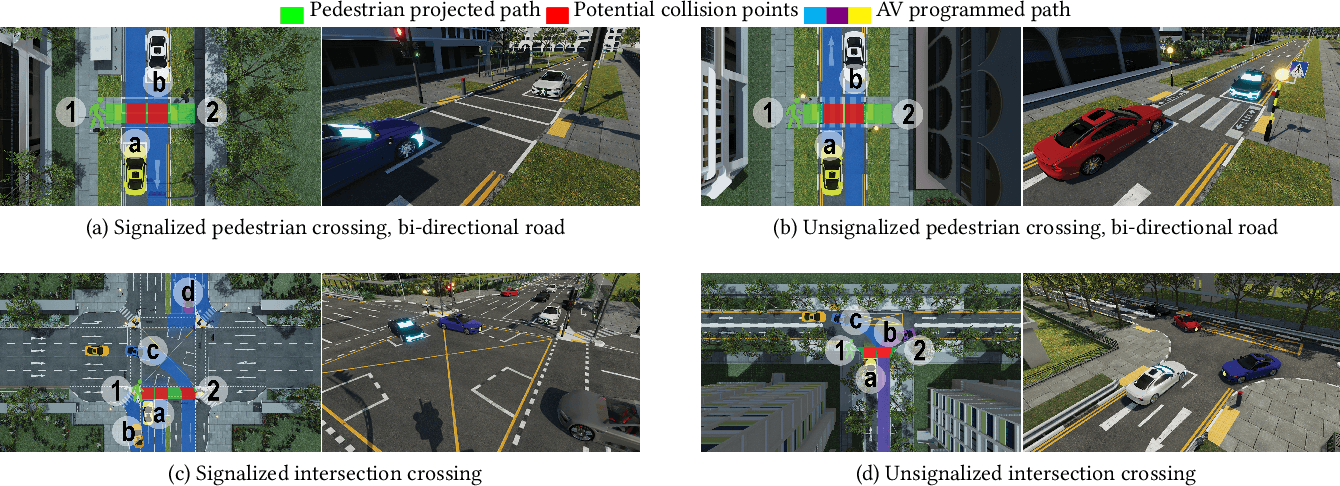

IntVRsection is a VR environment for pedestrian-focused studies at signalized and unsignalized intersections, rendered at roughly life scale with surrounding traffic. It enables safe, repeatable evaluations of autonomous-vehicle behaviors and external human-machine interfaces (HMIs) that go beyond what simpler desktop or abstract scenarios alone allow.

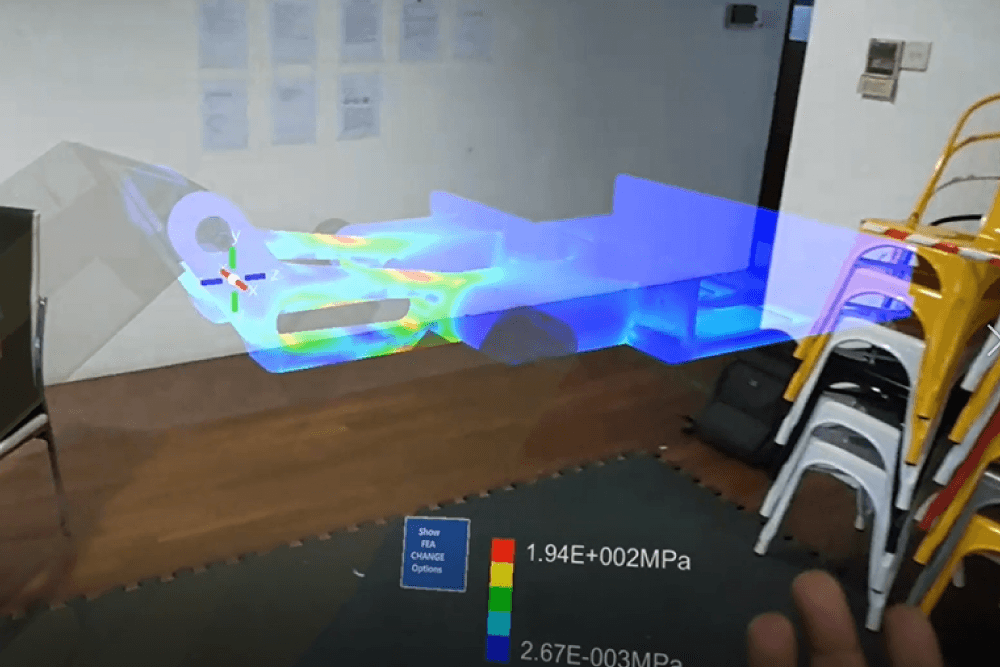

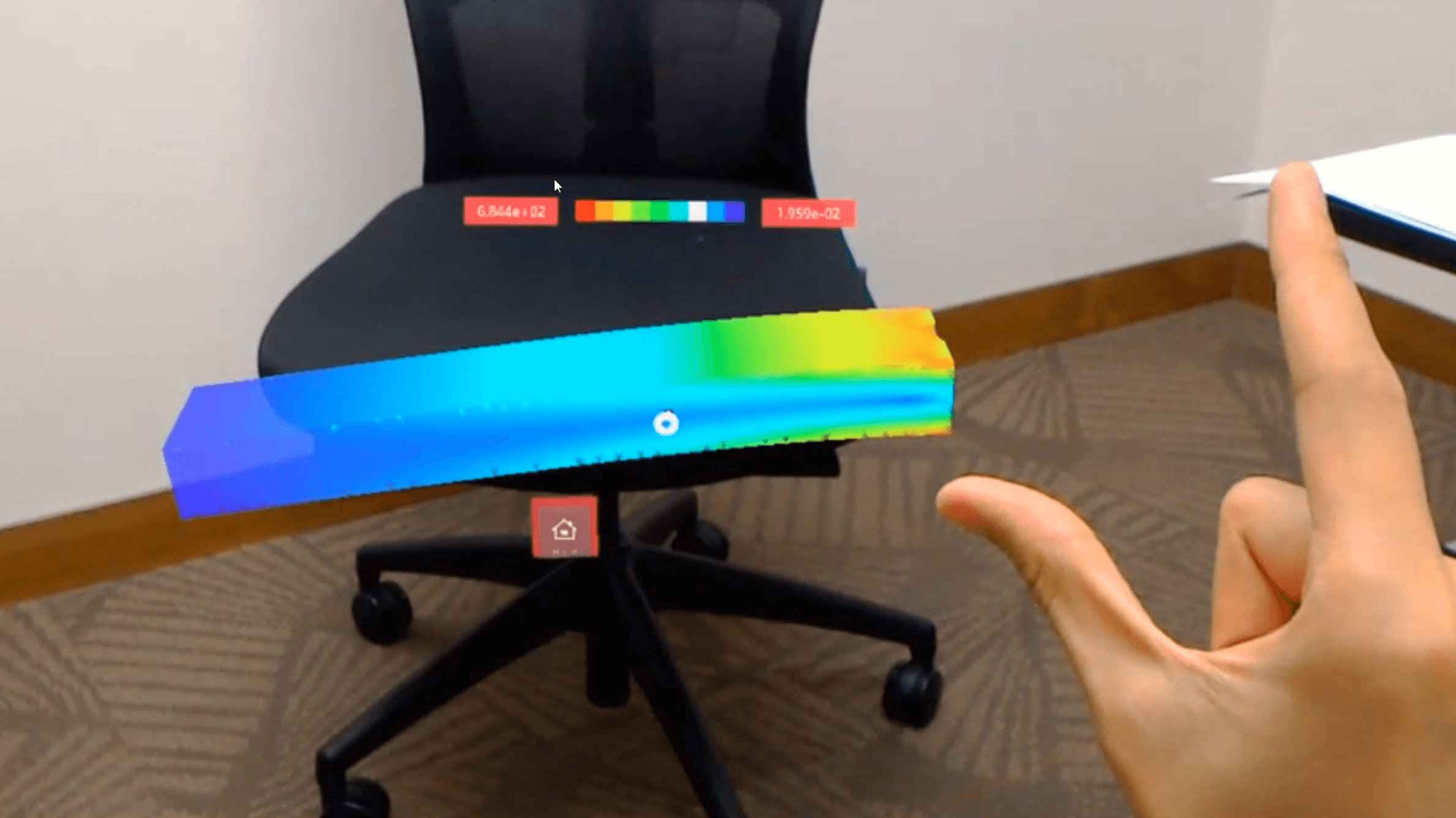

We investigate mixed reality for engineering design reviews so teams can inspect finite element analysis (FEA) results immersively alongside the modeled geometry. The aim is clearer spatial grounding of stresses and deformations compared with conventional monitor-centric FEA workflows.

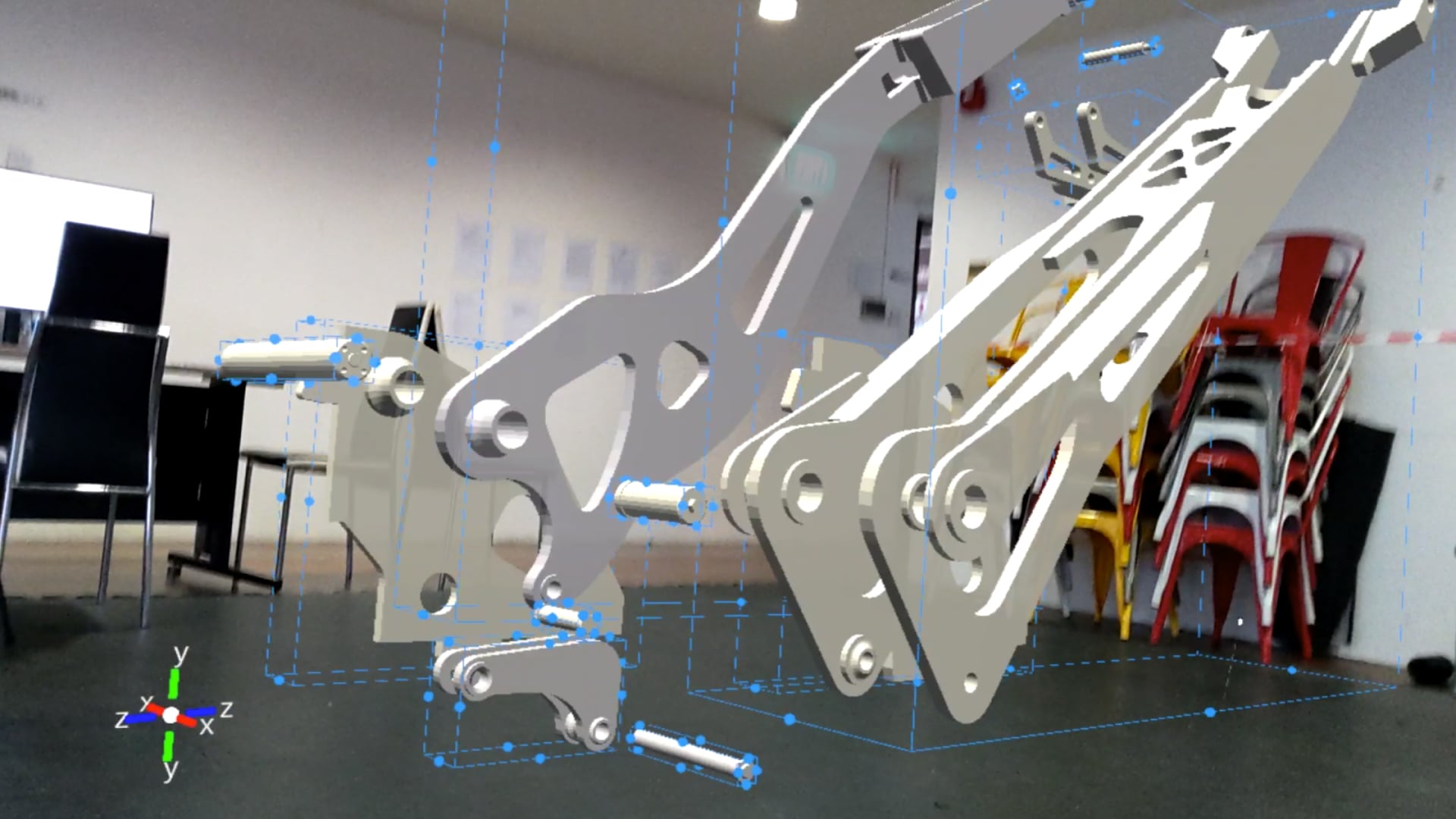

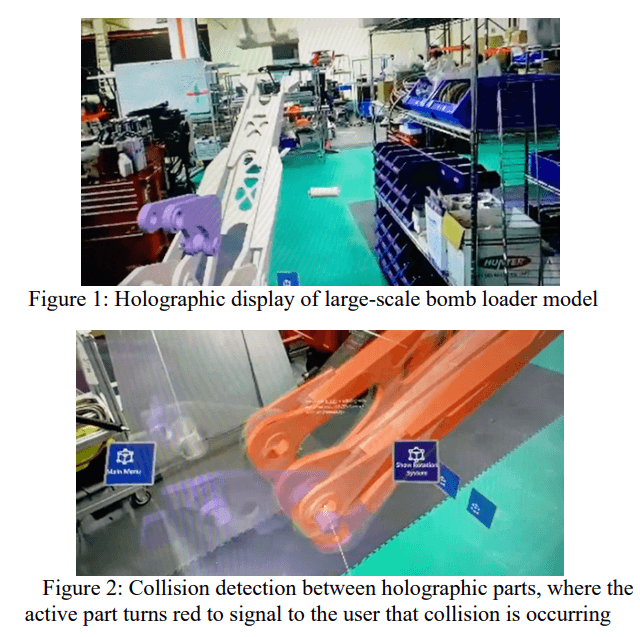

We build mixed reality tooling for design for manufacturing and assembly (DFMA) so planners can visualize parts and sequencing against real factory context rather than only in abstract VR scenes. Pilot feedback suggests practitioners find it usable and potentially cost-effective for refining assembly plans.

We report a preliminary study on whether holographic displays can improve how designers visualize and communicate spatial concepts. Early observations motivate further work on when holograms complement versus complicate established design-review workflows.

We prototype mixed-reality gestures for specifying structural loads directly on 3D models during finite element analysis, instead of relying solely on mouse-driven dialogs. An early evaluation contrasts point-apply, point-hold, and point-drag variants, with point-hold appearing the most usable for engineering-style tasks.